7.7 Extracting and saving responses

Chapter 7-Fine-tuning to follow instructions

In this section, we save the test-set responses so that we can score them in the next section. We also save a copy of the model for future use.

First, let's take a quick look at the responses generated by the fine-tuned model:

```python
torch.manual_seed(123)

for entry in test_data[:3]:
    input_text = format_input(entry)

    token_ids = generate(
        model=model,
        idx=text_to_token_ids(input_text, tokenizer).to(device),
        max_new_tokens=256,
        context_size=BASE_CONFIG["context_length"],
        eos_id=50256
    )
    generated_text = token_ids_to_text(token_ids, tokenizer)

    # Keep only the newly generated part: drop the prompt and the response marker
    response_text = (
        generated_text[len(input_text):]
        .replace("### Response:", "")
        .strip()
    )

    print(input_text)
    print(f"\nCorrect response:\n>> {entry['output']}")
    print(f"\nModel response:\n>> {response_text.strip()}")
    print("-------------------------------------")
```

Output:

```
Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:
Rewrite the sentence using a simile.

### Input:
The car is very fast.

Correct response:
>> The car is as fast as lightning.

Model response:
>> The car is as fast as a cheetah.
-------------------------------------
Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:
What type of cloud is typically associated with thunderstorms?

Correct response:
>> The type of cloud typically associated with thunderstorms is cumulonimbus.

Model response:
>> The type of cloud associated with thunderstorms is a cumulus cloud.
-------------------------------------
Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:
Name the author of 'Pride and Prejudice'.

Correct response:
>> Jane Austen.

Model response:
>> The author of 'Pride and Prejudice' is Jane Austen.
-------------------------------------
```

Comparing the test-set instructions, the given responses, and the model's responses, we can see that the model performs relatively well. The answers to the first and last instructions are clearly correct. The second answer is close: the model answers "cumulus cloud" instead of "cumulonimbus" (note, however, that cumulus clouds can develop into cumulonimbus clouds, which are capable of producing thunderstorms).
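The slicing step in the loop above works because the generated text begins with the prompt itself. The following is a minimal, self-contained sketch of that step on made-up strings rather than real model output. The helper name `extract_response` is hypothetical and not part of the chapter's code, and the sketch assumes the decoded text reproduces the prompt character for character.

```python
def extract_response(generated_text: str, input_text: str) -> str:
    """Return only the model's answer from the full generated text.

    Assumes generated_text starts with input_text (the prompt), which holds
    when generation returns the prompt tokens followed by the new tokens.
    """
    # Drop the prompt, which occupies the first len(input_text) characters
    remainder = generated_text[len(input_text):]
    # Remove the response marker and any surrounding whitespace
    return remainder.replace("### Response:", "").strip()


# Made-up example strings (not real model output)
prompt = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request."
    "\n\n### Instruction:\nName the author of 'Pride and Prejudice'."
)
full_text = prompt + "\n\n### Response:\nJane Austen."

print(extract_response(full_text, prompt))  # Jane Austen.
```

If the decoded text did not start with the exact prompt, the slice would cut at the wrong position, so a check such as `generated_text.startswith(input_text)` may be worth adding when adapting this to other tokenizers.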

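Most importantly, we can see that model evaluation is not as straightforward as in the previous chapter, where we only had to compute the percentage of correct spam/non-spam class labels to obtain the classification accuracy.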
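To make this concrete, here is a small sketch that applies a classification-style metric, exact string match, to the three examples shown earlier in this section. The function name `exact_match_accuracy` is an illustrative choice, not something defined in the chapter.

```python
# The three test examples shown earlier in this section
examples = [
    {"output": "The car is as fast as lightning.",
     "model_response": "The car is as fast as a cheetah."},
    {"output": "The type of cloud typically associated with thunderstorms is cumulonimbus.",
     "model_response": "The type of cloud associated with thunderstorms is a cumulus cloud."},
    {"output": "Jane Austen.",
     "model_response": "The author of 'Pride and Prejudice' is Jane Austen."},
]


def exact_match_accuracy(entries):
    """Fraction of entries whose model response equals the reference exactly."""
    matches = sum(
        entry["model_response"].strip() == entry["output"].strip()
        for entry in entries
    )
    return matches / len(entries)


print(exact_match_accuracy(examples))  # 0.0
```

Exact match gives a score of 0.0 even though two of the three answers are acceptable, which is why free-form responses need a more flexible way of scoring.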

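In practice, instruction-finetuned LLMs such as chatbots are evaluated using several approaches:

- Short-answer and multiple-choice benchmarks such as MMLU ("Measuring Massive Multitask Language Understanding", [https://arxiv.org/pdf/2009.03300](https://arxiv.org/pdf/2009.03300)), which test a model's knowledge

- Human preference comparisons against other LLMs, such as the LMSYS chatbot arena ([https://arena.lmsys.org](https://arena.lmsys.org))

- Automated conversational benchmarks, in which another LLM such as GPT-4 is used to evaluate the responses, such as AlpacaEval ([https://tatsu-lab.github.io/alpaca_eval/](https://tatsu-lab.github.io/alpaca_eval/))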
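The third approach amounts to sending a judge LLM a prompt that contains the instruction, a reference answer, and the model's response, and asking it for a score. The sketch below only builds such a prompt. The function name `build_judge_prompt` and the exact wording are assumptions made for illustration, not the prompt used by AlpacaEval, and the call to the judge model is deliberately left out.

```python
def build_judge_prompt(instruction: str, reference: str, response: str) -> str:
    """Build a scoring prompt for a judge LLM (illustrative wording only)."""
    return (
        f"Given the instruction `{instruction}` "
        f"and the reference answer `{reference}`, "
        f"score the model response `{response}` "
        "on a scale from 0 to 100, where 100 is the best score. "
        "Respond with the integer number only."
    )


judge_prompt = build_judge_prompt(
    instruction="What type of cloud is typically associated with thunderstorms?",
    reference="The type of cloud typically associated with thunderstorms is cumulonimbus.",
    response="The type of cloud associated with thunderstorms is a cumulus cloud.",
)
print(judge_prompt)
```

Asking for an integer only makes the judge's reply easy to parse and average over a test set.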

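In the next section, we will use an approach similar to AlpacaEval and have another LLM evaluate our model's responses. However, we will use our own test set instead of a publicly available benchmark dataset. For this, we add the model response to each entry (a dictionary) in `test_data` and save the result as an `instruction-data-with-response.json` file for record keeping, so that we can load and analyze it in a separate Python session if needed: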

```python
import json

from tqdm import tqdm

for i, entry in tqdm(enumerate(test_data), total=len(test_data)):
    input_text = format_input(entry)

    token_ids = generate(
        model=model,
        idx=text_to_token_ids(input_text, tokenizer).to(device),
        max_new_tokens=256,
        context_size=BASE_CONFIG["context_length"],
        eos_id=50256
    )
    generated_text = token_ids_to_text(token_ids, tokenizer)
    response_text = generated_text[len(input_text):].replace("### Response:", "").strip()

    # Store the model's answer next to the reference answer
    test_data[i]["model_response"] = response_text

with open("instruction-data-with-response.json", "w") as file:
    json.dump(test_data, file, indent=4)  # "indent" for pretty-printing
```

Output:

```
100%|██████████| 110/110 [00:59<00:00,  1.86it/s]
```

Let's check one of the entries to see whether the response was added correctly to `test_data`:

```python
print(test_data[0])
```

Output:

```
{'instruction': 'Rewrite the sentence using a simile.', 'input': 'The car is very fast.', 'output': 'The car is as fast as lightning.', 'model_response': 'The car is as fast as a cheetah.'}
```

We can see that both the original `output` and the model's `model_response` are present.
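Since the point of the JSON file is to reload the responses in a separate Python session, the following sketch shows what that could look like. So that it runs on its own, it first writes a one-entry stand-in file with the same keys to a temporary directory. In practice only the loading part is needed, pointed at the real `instruction-data-with-response.json`.

```python
import json
import tempfile
from pathlib import Path

# Stand-in for the file written above: one entry with the same keys,
# saved to a temporary directory so this sketch runs on its own
sample = [{
    "instruction": "Rewrite the sentence using a simile.",
    "input": "The car is very fast.",
    "output": "The car is as fast as lightning.",
    "model_response": "The car is as fast as a cheetah.",
}]
file_path = Path(tempfile.mkdtemp()) / "instruction-data-with-response.json"
with open(file_path, "w") as file:
    json.dump(sample, file, indent=4)

# In a fresh session, only this part is needed (with the real file path)
with open(file_path, "r") as file:
    loaded = json.load(file)

# Verify that every entry carries a model response before scoring
missing = [i for i, entry in enumerate(loaded) if "model_response" not in entry]
print(f"Loaded {len(loaded)} entries, {len(missing)} without a model response")
```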

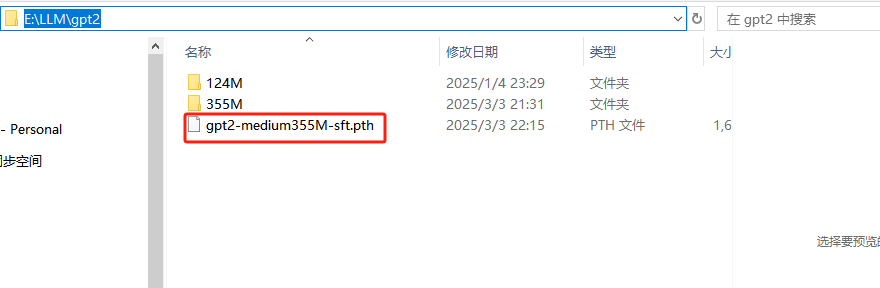

Finally, we save the fine-tuned model for future use:

```python
import re

# Strip spaces and parentheses from the model name to build the file name
file_name = f"E:\\LLM\\gpt2\\{re.sub(r'[ ()]', '', CHOOSE_MODEL)}-sft.pth"
torch.save(model.state_dict(), file_name)
print(f"Model saved as {file_name}")

# Load model via
# model.load_state_dict(torch.load("E:\\LLM\\gpt2\\gpt2-medium355M-sft.pth"))
```

Output:

```
Model saved as E:\LLM\gpt2\gpt2-medium355M-sft.pth
```
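The file name above is derived from `CHOOSE_MODEL` by stripping spaces and parentheses. The sketch below isolates that step and builds the path with `pathlib` instead of a hard-coded Windows path. The value of `CHOOSE_MODEL` is an assumption inferred from the printed file name, and the `checkpoints` directory is a hypothetical placeholder.

```python
import re
from pathlib import Path

# Assumed value, inferred from the saved file name printed above
CHOOSE_MODEL = "gpt2-medium (355M)"

# Remove spaces and parentheses so the name is safe to use in a file name
clean_name = re.sub(r"[ ()]", "", CHOOSE_MODEL)

# Hypothetical output directory; replace with your own location
output_dir = Path("checkpoints")
file_name = output_dir / f"{clean_name}-sft.pth"

print(clean_name)      # gpt2-medium355M
print(file_name.name)  # gpt2-medium355M-sft.pth
```

Using `Path` keeps the separator handling to the operating system, so the same code works unchanged on Windows, Linux, and macOS.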