Week G7: Semi-Supervised GAN Theory and Practice

>- **🍨 This post is a study log from the [🔗365-Day Deep Learning Training Camp](https://mp.weixin.qq.com/s/0dvHCaOoFnW8SCp3JpzKxg)**

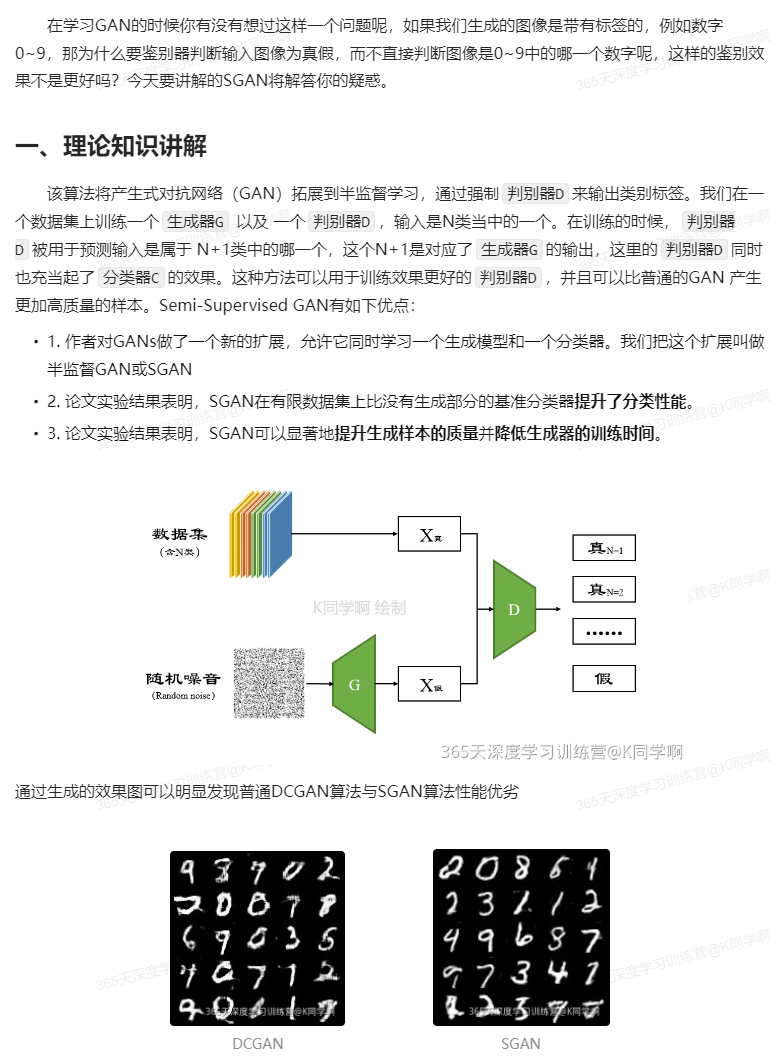

>- **🍖 Original author: [K同学啊](https://mtyjkh.blog.csdn.net/)**

```python
import argparse
import os
import math

import numpy as np
import torch
import torch.nn as nn
import torch.nn.functional as F
import torchvision.transforms as transforms
from torch.autograd import Variable
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision.utils import save_image

# Create a folder for saving generated images
os.makedirs("images", exist_ok=True)

# Parse command-line arguments with argparse
parser = argparse.ArgumentParser()
parser.add_argument("--n_epochs", type=int, default=50, help="number of training epochs")
parser.add_argument("--batch_size", type=int, default=64, help="number of samples per batch")
parser.add_argument("--lr", type=float, default=0.0002, help="Adam learning rate")
parser.add_argument("--b1", type=float, default=0.5, help="Adam: decay rate of the first-moment estimate")
parser.add_argument("--b2", type=float, default=0.999, help="Adam: decay rate of the second-moment estimate")
parser.add_argument("--n_cpu", type=int, default=8, help="number of CPU threads used for batch generation")
parser.add_argument("--latent_dim", type=int, default=100, help="dimensionality of the latent space")
parser.add_argument("--num_classes", type=int, default=10, help="number of classes in the dataset")
parser.add_argument("--img_size", type=int, default=32, help="size of each image (height = width)")
parser.add_argument("--channels", type=int, default=1, help="number of image channels (1 for grayscale)")
parser.add_argument("--sample_interval", type=int, default=400, help="interval (in batches) between saved image samples")

# opt = parser.parse_args()  # with this line the script cannot run in a Jupyter notebook
opt = parser.parse_args([])  # passing an empty list ([]) makes it runnable in a Jupyter notebook
print(opt)

# Use CUDA acceleration if a GPU is available
cuda = True if torch.cuda.is_available() else False
```

Output:

```text
Namespace(n_epochs=50, batch_size=64, lr=0.0002, b1=0.5, b2=0.999, n_cpu=8, latent_dim=100, num_classes=10, img_size=32, channels=1, sample_interval=400)
```
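`parse_args()` fails inside Jupyter because the kernel puts its own arguments into `sys.argv` (typically `-f <connection file>`), and argparse exits on arguments it does not recognize. The script and notebook versions could share one code path by using argparse's `parse_known_args()`, which returns the parsed namespace plus a list of leftover arguments instead of exiting. The sketch below is a minimal version of that idea. It assumes those extra arguments can safely be ignored and reproduces only two of the original flags. `build_parser` and `parse_options` are hypothetical helper names.

```python
import argparse


def build_parser():
    # Hypothetical helper: only two of the original options, for brevity
    parser = argparse.ArgumentParser()
    parser.add_argument("--n_epochs", type=int, default=50, help="number of training epochs")
    parser.add_argument("--batch_size", type=int, default=64, help="number of samples per batch")
    return parser


def parse_options(argv=None):
    # parse_known_args() returns (namespace, unrecognized_args) instead of exiting
    # on unknown arguments such as the "-f <file>" that a Jupyter kernel adds.
    # argv=None means "read sys.argv[1:]", as parse_args() does.
    opt, _unknown = build_parser().parse_known_args(argv)
    return opt
```

In a notebook, `opt = parse_options()` then behaves like `parse_args([])` plus any real flags. Run as a script, it still honors `--n_epochs` and friends.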

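```python
def weights_init_normal(m):
    """Custom weight initialization, applied to every submodule via model.apply()."""
    classname = m.__class__.__name__
    if classname.find("Conv") != -1:
        # Convolution layers: weights ~ N(0, 0.02)
        torch.nn.init.normal_(m.weight.data, 0.0, 0.02)
    elif classname.find("BatchNorm") != -1:
        # BatchNorm layers: scale ~ N(1, 0.02), shift = 0
        torch.nn.init.normal_(m.weight.data, 1.0, 0.02)
        torch.nn.init.constant_(m.bias.data, 0.0)
```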
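`weights_init_normal` chooses a rule by substring-matching the module's class name, so it helps to check which layers it actually touches. The plain-Python sketch below only replays that matching logic on a list of class names and never calls PyTorch. `init_rule` is a hypothetical helper. It shows that the `nn.Linear` layers in both networks, and the unused `nn.Embedding`, keep PyTorch's default initialization.

```python
def init_rule(classname: str) -> str:
    # Mirrors the substring checks in weights_init_normal ("Conv" is tested first)
    if classname.find("Conv") != -1:
        return "normal(0, 0.02) on weight"
    elif classname.find("BatchNorm") != -1:
        return "normal(1, 0.02) on weight, 0 on bias"
    return "left at PyTorch default"


# Class names that appear in the Generator / Discriminator defined in this post
for name in ["Conv2d", "BatchNorm2d", "Linear", "Embedding", "LeakyReLU", "Dropout2d"]:
    print(f"{name:12s} -> {init_rule(name)}")
```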

```python
import torch.nn as nn


class Generator(nn.Module):
    def __init__(self):
        super(Generator, self).__init__()
        # Label embedding that maps class labels into the latent space
        # (it is never used in forward(): this generator is unconditional)
        self.label_emb = nn.Embedding(opt.num_classes, opt.latent_dim)

        # Spatial size before upsampling (32 // 4 = 8)
        self.init_size = opt.img_size // 4
        # First fully connected layer: map the noise vector to 128 feature maps of size init_size x init_size
        self.l1 = nn.Sequential(nn.Linear(opt.latent_dim, 128 * self.init_size ** 2))

        # Generator conv blocks: two 2x upsampling steps (8 -> 16 -> 32)
        self.conv_blocks = nn.Sequential(
            nn.BatchNorm2d(128),
            nn.Upsample(scale_factor=2),
            nn.Conv2d(128, 128, 3, stride=1, padding=1),
            nn.BatchNorm2d(128, 0.8),  # the 2nd positional argument of BatchNorm2d is eps
            nn.LeakyReLU(0.2, inplace=True),
            nn.Upsample(scale_factor=2),
            nn.Conv2d(128, 64, 3, stride=1, padding=1),
            nn.BatchNorm2d(64, 0.8),
            nn.LeakyReLU(0.2, inplace=True),
            nn.Conv2d(64, opt.channels, 3, stride=1, padding=1),
            nn.Tanh(),  # outputs in [-1, 1]
        )

    def forward(self, noise):
        out = self.l1(noise)
        out = out.view(out.shape[0], 128, self.init_size, self.init_size)
        img = self.conv_blocks(out)
        return img


class Discriminator(nn.Module):
    def __init__(self):
        super(Discriminator, self).__init__()

        def discriminator_block(in_filters, out_filters, bn=True):
            """Return the layers of one discriminator block (the stride-2 conv halves the size)."""
            block = [nn.Conv2d(in_filters, out_filters, 3, 2, 1), nn.LeakyReLU(0.2, inplace=True), nn.Dropout2d(0.25)]
            if bn:
                block.append(nn.BatchNorm2d(out_filters, 0.8))
            return block

        # Discriminator conv blocks
        self.conv_blocks = nn.Sequential(
            *discriminator_block(opt.channels, 16, bn=False),
            *discriminator_block(16, 32),
            *discriminator_block(32, 64),
            *discriminator_block(64, 128),
        )

        # Height/width of the downsampled feature map (32 // 2**4 = 2)
        ds_size = opt.img_size // 2 ** 4

        # Output layers
        # Real/fake head
        self.adv_layer = nn.Sequential(nn.Linear(128 * ds_size ** 2, 1), nn.Sigmoid())
        # Class head with num_classes + 1 outputs (the extra class means "generated").
        # It returns raw logits: CrossEntropyLoss applies log-softmax internally,
        # so adding nn.Softmax() here would apply softmax twice.
        self.aux_layer = nn.Sequential(nn.Linear(128 * ds_size ** 2, opt.num_classes + 1))

    def forward(self, img):
        out = self.conv_blocks(img)
        out = out.view(out.shape[0], -1)
        validity = self.adv_layer(out)
        label = self.aux_layer(out)
        return validity, label
```
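Both networks rely on size arithmetic that the code only states through `opt.img_size // 4` and `opt.img_size // 2 ** 4`. The stdlib sketch below checks those numbers with the standard `nn.Conv2d` output-size formula, floor((n + 2·padding − kernel) / stride) + 1 with dilation 1. The Generator's stride-1, padding-1 convolutions keep the size unchanged, so only the two `Upsample` layers change it. The helper names are hypothetical.

```python
def conv2d_out(size, kernel, stride, padding):
    # Output size of nn.Conv2d with dilation = 1
    return (size + 2 * padding - kernel) // stride + 1


def discriminator_sizes(img_size, n_blocks=4):
    # Four Conv2d(kernel=3, stride=2, padding=1) blocks, as in the Discriminator
    sizes = [img_size]
    for _ in range(n_blocks):
        sizes.append(conv2d_out(sizes[-1], kernel=3, stride=2, padding=1))
    return sizes


def generator_sizes(img_size):
    # Start at img_size // 4, then two Upsample(scale_factor=2) layers
    init_size = img_size // 4
    return [init_size, init_size * 2, init_size * 4]


img_size = 32  # opt.img_size
ds_size = img_size // 2 ** 4
print(generator_sizes(img_size))      # [8, 16, 32]
print(discriminator_sizes(img_size))  # [32, 16, 8, 4, 2]
print(128 * ds_size ** 2)             # 512 input features for adv_layer / aux_layer
```

The two formulas only agree when the size divides cleanly. With `img_size=28`, the stride-2 stack gives 28 → 14 → 7 → 4 → 2, but `28 // 2 ** 4` is 1. The linear heads would then expect 128 input features and receive 512. Resizing MNIST to 32×32 keeps both calculations consistent.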

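```python
# Define the loss functions
adversarial_loss = torch.nn.BCELoss()  # binary cross-entropy, for the real/fake (adversarial) head
auxiliary_loss = torch.nn.CrossEntropyLoss()  # cross-entropy for the (num_classes + 1)-way head; expects raw logits
```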
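`nn.CrossEntropyLoss` applies log-softmax to its input itself, so the class head should hand it raw logits. The stdlib-only sketch below re-implements the per-sample cross-entropy to show what happens otherwise. When the input has already been passed through softmax, even a perfectly confident, correct prediction over 11 outputs cannot push the loss below log(e + 10) − 1 ≈ 1.54. The logit values are made up for illustration.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def cross_entropy(logits, target):
    # Per-sample value of nn.CrossEntropyLoss: -log(softmax(logits)[target])
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


# 11 outputs = 10 digit classes + 1 "generated" class; confident, correct prediction of class 3
logits = [0.0] * 11
logits[3] = 20.0

loss_on_logits = cross_entropy(logits, 3)          # close to 0
loss_on_probs = cross_entropy(softmax(logits), 3)  # softmax effectively applied twice
print(loss_on_logits, loss_on_probs)
```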

```python
# Initialize the generator and discriminator
generator = Generator()  # create the generator
discriminator = Discriminator()  # create the discriminator

# If a GPU is used, move the models and loss functions to it
if cuda:
    generator.cuda()
    discriminator.cuda()
    adversarial_loss.cuda()
    auxiliary_loss.cuda()

# Initialize the model weights
generator.apply(weights_init_normal)  # initialize the generator's weights
discriminator.apply(weights_init_normal)  # initialize the discriminator's weights

# Configure the data loader
os.makedirs("./paper", exist_ok=True)  # create the folder that stores the MNIST dataset
dataloader = torch.utils.data.DataLoader(
    datasets.MNIST(
        "./paper",
        train=True,
        download=True,
        transform=transforms.Compose(
            [transforms.Resize(opt.img_size), transforms.ToTensor(), transforms.Normalize([0.5], [0.5])]
        ),
    ),
    batch_size=opt.batch_size,
    shuffle=True,
)
```
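Two numbers from this data configuration show up later.

- `Normalize([0.5], [0.5])` maps the `[0, 1]` pixels produced by `ToTensor()` to `[-1, 1]`, the same range as the generator's `Tanh` output.
- With the 60,000-image MNIST training split and `batch_size=64`, the loader yields 938 batches per epoch, because `DataLoader` keeps the final partial batch by default. This matches `Batch 937/938` in the training log.

The stdlib sketch below just does this arithmetic. `normalize`/`denormalize` are hypothetical helpers for a single channel.

```python
import math


def normalize(x, mean=0.5, std=0.5):
    # transforms.Normalize computes (x - mean) / std for each channel
    return (x - mean) / std


def denormalize(y, mean=0.5, std=0.5):
    return y * std + mean


print(normalize(0.0), normalize(1.0))  # -1.0 1.0

n_train = 60000  # size of the MNIST training split
batch_size = 64  # opt.batch_size
batches_per_epoch = math.ceil(n_train / batch_size)
last_batch = n_train - (batches_per_epoch - 1) * batch_size
print(batches_per_epoch, last_batch)  # 938 32
```

The last batch of each epoch therefore holds only 32 real images.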

```python
# Optimizers
optimizer_G = torch.optim.Adam(generator.parameters(), lr=opt.lr, betas=(opt.b1, opt.b2))  # generator optimizer
optimizer_D = torch.optim.Adam(discriminator.parameters(), lr=opt.lr, betas=(opt.b1, opt.b2))  # discriminator optimizer

# Choose tensor types depending on whether a GPU is used
FloatTensor = torch.cuda.FloatTensor if cuda else torch.FloatTensor
LongTensor = torch.cuda.LongTensor if cuda else torch.LongTensor
```

Output (dataset download progress):

```text
100%|█████████████████████████████████████████████████████████████████████████████| 9.91M/9.91M [00:02<00:00, 3.36MB/s]
100%|██████████████████████████████████████████████████████████████████████████████| 28.9k/28.9k [00:00<00:00, 206kB/s]
100%|█████████████████████████████████████████████████████████████████████████████| 1.65M/1.65M [00:00<00:00, 1.78MB/s]
100%|█████████████████████████████████████████████████████████████████████████████| 4.54k/4.54k [00:00<00:00, 5.50MB/s]
```
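Both optimizers use `lr=0.0002` and `betas=(0.5, 0.999)`. That is a lower first-moment decay than `torch.optim.Adam`'s default of 0.9, a setting popularized by DCGAN. The scalar sketch below writes out the standard Adam update with bias correction, which should correspond to `torch.optim.Adam` with no weight decay and `amsgrad=False`. It is an illustration on one number, not PyTorch's implementation. `adam_step` is a hypothetical helper.

```python
import math


def adam_step(theta, grad, m, v, t, lr=0.0002, b1=0.5, b2=0.999, eps=1e-8):
    """One Adam update for a single scalar parameter (t counts steps from 1)."""
    m = b1 * m + (1 - b1) * grad         # biased first-moment estimate
    v = b2 * v + (1 - b2) * grad * grad  # biased second-moment estimate
    m_hat = m / (1 - b1 ** t)            # bias-corrected moments
    v_hat = v / (1 - b2 ** t)
    theta = theta - lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta, m, v


theta, m, v = adam_step(1.0, grad=3.0, m=0.0, v=0.0, t=1)
print(1.0 - theta)  # the first step has size ~lr, whatever the gradient's magnitude
```

With `b1=0.5` the first-moment estimate mostly reflects the last couple of gradients. That makes the updates react quickly to the constantly shifting adversarial objective.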

```python
for epoch in range(opt.n_epochs):
    for i, (imgs, labels) in enumerate(dataloader):
        batch_size = imgs.shape[0]

        # Adversarial ground truths
        valid = Variable(FloatTensor(batch_size, 1).fill_(1.0), requires_grad=False)  # for real samples
        fake = Variable(FloatTensor(batch_size, 1).fill_(0.0), requires_grad=False)  # for generated samples
        # Class label for generated samples: the extra class with index num_classes (10)
        fake_aux_gt = Variable(LongTensor(batch_size).fill_(opt.num_classes), requires_grad=False)

        # Configure the input data
        real_imgs = Variable(imgs.type(FloatTensor))  # real images
        labels = Variable(labels.type(LongTensor))  # real class labels

        # -----------------
        #  Train Generator
        # -----------------
        optimizer_G.zero_grad()

        # Sample noise as the generator input
        z = Variable(FloatTensor(np.random.normal(0, 1, (batch_size, opt.latent_dim))))

        # Generate a batch of images
        gen_imgs = generator(z)

        # Generator loss: measures the generator's ability to fool the discriminator
        validity, _ = discriminator(gen_imgs)
        g_loss = adversarial_loss(validity, valid)

        g_loss.backward()
        optimizer_G.step()

        # ---------------------
        #  Train Discriminator
        # ---------------------
        optimizer_D.zero_grad()

        # Loss on real images
        real_pred, real_aux = discriminator(real_imgs)
        d_real_loss = (adversarial_loss(real_pred, valid) + auxiliary_loss(real_aux, labels)) / 2

        # Loss on generated images
        fake_pred, fake_aux = discriminator(gen_imgs.detach())
        d_fake_loss = (adversarial_loss(fake_pred, fake) + auxiliary_loss(fake_aux, fake_aux_gt)) / 2

        # Total discriminator loss
        d_loss = (d_real_loss + d_fake_loss) / 2

        # Discriminator classification accuracy over real + generated images
        pred = np.concatenate([real_aux.data.cpu().numpy(), fake_aux.data.cpu().numpy()], axis=0)
        gt = np.concatenate([labels.data.cpu().numpy(), fake_aux_gt.data.cpu().numpy()], axis=0)
        d_acc = np.mean(np.argmax(pred, axis=1) == gt)

        d_loss.backward()
        optimizer_D.step()

        batches_done = epoch * len(dataloader) + i
        if batches_done % opt.sample_interval == 0:
            save_image(gen_imgs.data[:25], "images/%d.png" % batches_done, nrow=5, normalize=True)

    # Print the statistics of the last batch of each epoch
    print(
        "[Epoch %d/%d] [Batch %d/%d] [D loss: %f, acc: %d%%] [G loss: %f]"
        % (epoch, opt.n_epochs, i, len(dataloader), d_loss.item(), 100 * d_acc, g_loss.item())
    )
```

Output:

```text
[Epoch 0/50] [Batch 937/938] [D loss: 1.362414, acc: 50%] [G loss: 0.707926]
[Epoch 1/50] [Batch 937/938] [D loss: 1.360016, acc: 50%] [G loss: 0.749867]
[Epoch 2/50] [Batch 937/938] [D loss: 1.345859, acc: 50%] [G loss: 0.901844]
[Epoch 3/50] [Batch 937/938] [D loss: 1.309943, acc: 50%] [G loss: 0.653252]
[Epoch 4/50] [Batch 937/938] [D loss: 1.340662, acc: 50%] [G loss: 0.742371]
[Epoch 5/50] [Batch 937/938] [D loss: 1.354004, acc: 50%] [G loss: 0.573718]
[Epoch 6/50] [Batch 937/938] [D loss: 1.326664, acc: 51%] [G loss: 0.729521]
[Epoch 7/50] [Batch 937/938] [D loss: 1.340284, acc: 48%] [G loss: 0.893304]
[Epoch 8/50] [Batch 937/938] [D loss: 1.297407, acc: 53%] [G loss: 0.512779]
[Epoch 9/50] [Batch 937/938] [D loss: 1.244959, acc: 51%] [G loss: 0.867025]
[Epoch 10/50] [Batch 937/938] [D loss: 1.409401, acc: 50%] [G loss: 0.626929]
[Epoch 11/50] [Batch 937/938] [D loss: 1.233004, acc: 53%] [G loss: 0.711049]
[Epoch 12/50] [Batch 937/938] [D loss: 1.339882, acc: 56%] [G loss: 0.841943]
[Epoch 13/50] [Batch 937/938] [D loss: 1.499406, acc: 50%] [G loss: 0.580588]
[Epoch 14/50] [Batch 937/938] [D loss: 1.242574, acc: 53%] [G loss: 1.458132]
[Epoch 15/50] [Batch 937/938] [D loss: 1.357433, acc: 54%] [G loss: 0.773089]
[Epoch 16/50] [Batch 937/938] [D loss: 1.319705, acc: 53%] [G loss: 0.728283]
[Epoch 17/50] [Batch 937/938] [D loss: 1.368955, acc: 46%] [G loss: 1.020731]
[Epoch 18/50] [Batch 937/938] [D loss: 1.383472, acc: 51%] [G loss: 0.933235]
[Epoch 19/50] [Batch 937/938] [D loss: 1.593254, acc: 54%] [G loss: 0.380877]
[Epoch 20/50] [Batch 937/938] [D loss: 1.242541, acc: 50%] [G loss: 0.761861]
[Epoch 21/50] [Batch 937/938] [D loss: 1.301212, acc: 62%] [G loss: 0.558420]
[Epoch 22/50] [Batch 937/938] [D loss: 1.324697, acc: 53%] [G loss: 1.663191]
[Epoch 23/50] [Batch 937/938] [D loss: 1.350422, acc: 60%] [G loss: 0.750597]
[Epoch 24/50] [Batch 937/938] [D loss: 1.246442, acc: 56%] [G loss: 0.938523]
[Epoch 25/50] [Batch 937/938] [D loss: 1.165384, acc: 57%] [G loss: 0.945472]
[Epoch 26/50] [Batch 937/938] [D loss: 1.318104, acc: 51%] [G loss: 0.943989]
[Epoch 27/50] [Batch 937/938] [D loss: 1.369688, acc: 51%] [G loss: 1.045065]
[Epoch 28/50] [Batch 937/938] [D loss: 1.241795, acc: 53%] [G loss: 0.753452]
[Epoch 29/50] [Batch 937/938] [D loss: 1.245152, acc: 59%] [G loss: 1.388610]
[Epoch 30/50] [Batch 937/938] [D loss: 1.415710, acc: 54%] [G loss: 1.065790]
[Epoch 31/50] [Batch 937/938] [D loss: 1.261829, acc: 56%] [G loss: 0.598533]
[Epoch 32/50] [Batch 937/938] [D loss: 1.087898, acc: 71%] [G loss: 1.146997]
[Epoch 33/50] [Batch 937/938] [D loss: 1.199838, acc: 53%] [G loss: 1.855803]
[Epoch 34/50] [Batch 937/938] [D loss: 1.146974, acc: 56%] [G loss: 0.522069]
[Epoch 35/50] [Batch 937/938] [D loss: 1.228530, acc: 57%] [G loss: 2.065096]
[Epoch 36/50] [Batch 937/938] [D loss: 1.220076, acc: 57%] [G loss: 1.654905]
[Epoch 37/50] [Batch 937/938] [D loss: 1.130150, acc: 62%] [G loss: 0.764476]
[Epoch 38/50] [Batch 937/938] [D loss: 1.200049, acc: 62%] [G loss: 0.843963]
[Epoch 39/50] [Batch 937/938] [D loss: 1.236222, acc: 62%] [G loss: 1.258294]
[Epoch 40/50] [Batch 937/938] [D loss: 1.133929, acc: 64%] [G loss: 0.937561]
[Epoch 41/50] [Batch 937/938] [D loss: 1.131473, acc: 73%] [G loss: 1.858397]
[Epoch 42/50] [Batch 937/938] [D loss: 1.208524, acc: 67%] [G loss: 2.926470]
[Epoch 43/50] [Batch 937/938] [D loss: 1.054991, acc: 70%] [G loss: 2.653653]
[Epoch 44/50] [Batch 937/938] [D loss: 1.598110, acc: 37%] [G loss: 0.824140]
[Epoch 45/50] [Batch 937/938] [D loss: 1.037547, acc: 76%] [G loss: 1.001997]
[Epoch 46/50] [Batch 937/938] [D loss: 1.346323, acc: 57%] [G loss: 0.364864]
[Epoch 47/50] [Batch 937/938] [D loss: 1.138918, acc: 71%] [G loss: 0.415268]
[Epoch 48/50] [Batch 937/938] [D loss: 0.977577, acc: 81%] [G loss: 2.182211]
[Epoch 49/50] [Batch 937/938] [D loss: 1.880296, acc: 42%] [G loss: 1.574159]
```
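The logged `acc` mixes two tasks into one number. A real image counts as correct only if its digit is predicted, and a generated image only if the extra class `num_classes` (index 10) is predicted. The list-based sketch below reproduces the `d_acc` calculation without NumPy. The score vectors are made-up one-hot examples and the helper names are hypothetical.

```python
NUM_CLASSES = 10  # opt.num_classes; index 10 is the extra "generated" class


def argmax(row):
    return max(range(len(row)), key=lambda k: row[k])


def discriminator_accuracy(real_scores, real_labels, fake_scores):
    # Concatenate real and generated predictions, as the training loop does with np.concatenate
    scores = real_scores + fake_scores
    targets = list(real_labels) + [NUM_CLASSES] * len(fake_scores)
    hits = sum(argmax(row) == t for row, t in zip(scores, targets))
    return hits / len(targets)


def one_hot_scores(k, n=NUM_CLASSES + 1):
    return [1.0 if j == k else 0.0 for j in range(n)]


# Two real digits classified correctly, one real image called "generated",
# and one generated image mistaken for the digit 7
real = [one_hot_scores(3), one_hot_scores(5), one_hot_scores(NUM_CLASSES)]
fake = [one_hot_scores(7)]
print(discriminator_accuracy(real, [3, 5, 1], fake))  # 0.5

# A discriminator that calls every image "generated" also scores exactly 50%
all_generated = [one_hot_scores(NUM_CLASSES)] * 4
print(discriminator_accuracy(all_generated[:2], [3, 5], all_generated[2:]))  # 0.5
```

Real and generated images contribute equally in the training loop. An `acc` near 50% in the log therefore does not by itself show that the digits are being classified correctly. The value is also computed on the final 32-image batch of each epoch only, so it is noisy.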

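Takeaways: I could not find the MNIST dataset, so I tested with a different dataset. The results were mediocre: in the log above, the discriminator's accuracy stays close to 50% for the first 20 epochs and then fluctuates between 37% and 81%. I will keep studying the related material to find out why.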