WidowX-250s 机械臂的简单数字孪生案例

前面一段时间记录了一下WidowX-250s机械臂的学习与遥操作演示,相关链接如下:

WidowX-250s 机械臂学习记录:

https://blog.csdn.net/qq_54900679/article/details/145556979

WidowX-250s 机械臂遥操作演示记录:

https://blog.csdn.net/qq_54900679/article/details/145578127

接下来进行WidowX-250s 机械臂的数字孪生操作演示,也可以理解为用真机去遥控仿真中的机械臂运动,即Real2Sim!

系统:Ubuntu20.04,ROS1;硬件:1台笔记本、1台机械臂

1.机械臂的launch启动文件配置以及话题读取

因为原先完成了aloha相关项目的配置,所以对于这次的WidowX-250s机械臂的序列号配置,依然保留原先的命名风格。

主动端的机械臂序列号名称定义为:/dev/ttyDXL_master_left

首先需要启动机械臂的launch运行文件,single_real2sim.launch文件内容如下:

<launch><arg name="robot_model_master" default="wx250s"/><arg name="base_link_master" default="base_link"/><arg name="master_node" default="$(find aloha)/config_single/master_modes_left.yaml"/><arg name="launch_driver" default="true"/><arg name="use_sim" default="false"/><arg name="robot" value="master_left"/><include if="$(arg launch_driver)" file="$(find interbotix_xsarm_control)/launch/xsarm_control.launch"><arg name="robot_model" value="$(arg robot_model_master)"/><arg name="robot_name" value="$(arg robot)"/><arg name="base_link_frame" value="$(arg base_link_master)"/><arg name="use_world_frame" value="false"/><arg name="use_rviz" value="false"/><arg name="mode_configs" value="$(arg master_node)"/><arg name="use_sim" value="$(arg use_sim)"/></include><nodename="master_left_transform_broadcaster"pkg="tf2_ros"type="static_transform_publisher"args="0 -0.25 0 0 0 0 /world /$(arg robot)/base_link"/></launch>

launch文件的运行指令:

roslaunch single_real2sim.launch

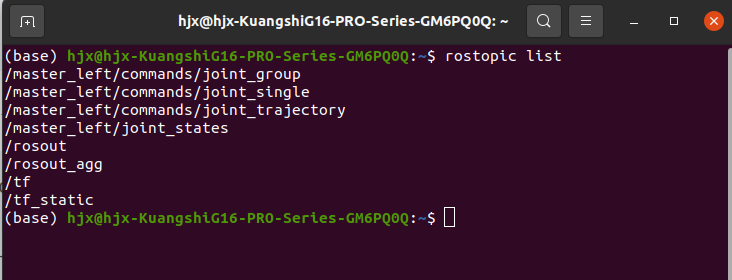

继续重新开启一个终端,运行查看关节话题:

rostopic list

终端会显示:

我们来查看一下/master_left/joint_states:

rostopic echo /master_left/joint_states

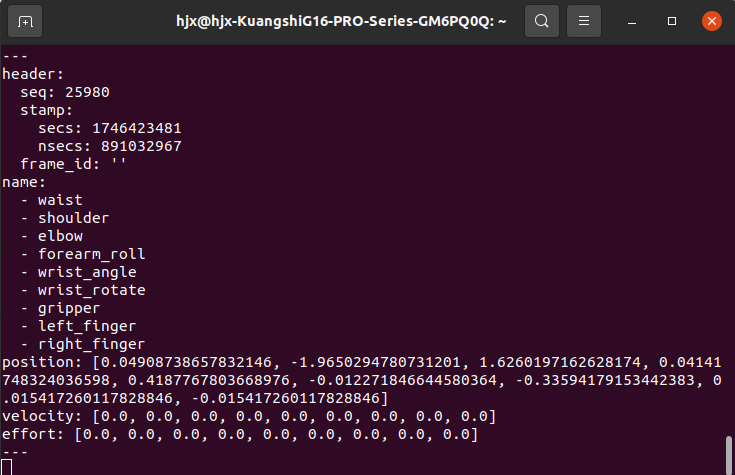

终端会显示如下关节信息的动态变化:

我们需要获取的是其中的position数据列表,将其实时的发送给仿真环境中。

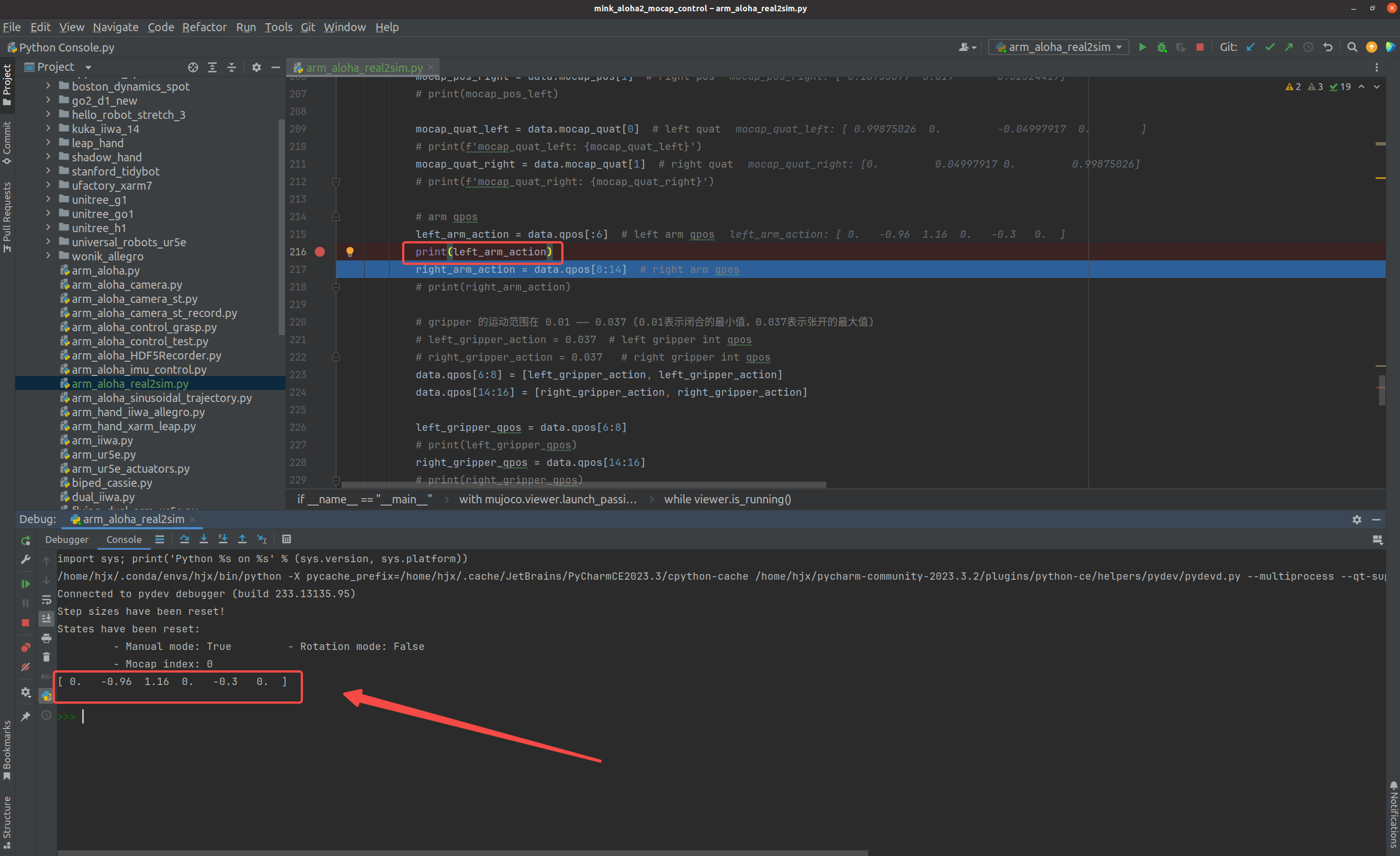

2.Mujoco仿真环境的关节数据读取

下面继续基于Mujoco仿真环境来进行机械臂的关节信息读取,以mink项目的调试为例:

可以看到左臂的6个关节的qpos数据可以获取到(调试中不包含夹爪的qpos,只是用来演示一下),我们要做的就是将这个qpos数据实时替换为真实机械臂的position数据,这样就可以实现真实与仿真的连通了。

3.利用真机遥操作Mujoco仿真中的ARM

好了,下面开始Real2Sim,好戏开始:

定义一个机械臂的回调函数python脚本(arm_aloha_real_recorder.py):

注意:下面只用来测试单臂的遥操作

import numpy as np

import time

# from constants import DT

from interbotix_xs_msgs.msg import JointSingleCommandimport IPython

e = IPython.embed### ALOHA fixed constants

DT = 0.02

FPS = 50class ArmRecorder:def __init__(self, init_node=True, is_debug=False):from collections import dequeimport rospyfrom sensor_msgs.msg import JointStatefrom interbotix_xs_msgs.msg import JointGroupCommand, JointSingleCommandself.secs = Noneself.nsecs = Noneself.qpos = Noneself.effort = Noneself.arm_command = Noneself.gripper_command = Noneself.is_debug = is_debugif init_node:rospy.init_node('recorder', anonymous=True)rospy.Subscriber(f"/master_left/joint_states", JointState, self.puppet_state_cb)rospy.Subscriber(f"/master_left/commands/joint_group", JointGroupCommand, self.puppet_arm_commands_cb)rospy.Subscriber(f"/master_left/commands/joint_single", JointSingleCommand, self.puppet_gripper_commands_cb)if self.is_debug:self.joint_timestamps = deque(maxlen=50)self.arm_command_timestamps = deque(maxlen=50)self.gripper_command_timestamps = deque(maxlen=50)time.sleep(0.1)def puppet_state_cb(self, data):self.qpos = data.positionself.qvel = data.velocityself.effort = data.effortself.data = dataif self.is_debug:self.joint_timestamps.append(time.time())def puppet_arm_commands_cb(self, data):self.arm_command = data.cmdif self.is_debug:self.arm_command_timestamps.append(time.time())def puppet_gripper_commands_cb(self, data):self.gripper_command = data.cmdif self.is_debug:self.gripper_command_timestamps.append(time.time())def print_diagnostics(self):def dt_helper(l):l = np.array(l)diff = l[1:] - l[:-1]return np.mean(diff)joint_freq = 1 / dt_helper(self.joint_timestamps)arm_command_freq = 1 / dt_helper(self.arm_command_timestamps)gripper_command_freq = 1 / dt_helper(self.gripper_command_timestamps)print(f'{joint_freq=:.2f}\n{arm_command_freq=:.2f}\n{gripper_command_freq=:.2f}\n')def get_arm_joint_positions(bot):return bot.arm.core.joint_states.position[:6]def get_arm_gripper_positions(bot):joint_position = bot.gripper.core.joint_states.position[6]return joint_positiondef move_arms(bot_list, target_pose_list, move_time=1):num_steps = int(move_time / DT)curr_pose_list = [get_arm_joint_positions(bot) for bot in bot_list]traj_list = [np.linspace(curr_pose, target_pose, num_steps) for curr_pose, target_pose in zip(curr_pose_list, target_pose_list)]for t in range(num_steps):for bot_id, bot in enumerate(bot_list):bot.arm.set_joint_positions(traj_list[bot_id][t], blocking=False)time.sleep(DT)def move_grippers(bot_list, target_pose_list, move_time):gripper_command = JointSingleCommand(name="gripper")num_steps = int(move_time / DT)curr_pose_list = [get_arm_gripper_positions(bot) for bot in bot_list]traj_list = [np.linspace(curr_pose, target_pose, num_steps) for curr_pose, target_pose in zip(curr_pose_list, target_pose_list)]for t in range(num_steps):for bot_id, bot in enumerate(bot_list):gripper_command.cmd = traj_list[bot_id][t]bot.gripper.core.pub_single.publish(gripper_command)time.sleep(DT)def setup_puppet_bot(bot):bot.dxl.robot_reboot_motors("single", "gripper", True)bot.dxl.robot_set_operating_modes("group", "arm", "position")bot.dxl.robot_set_operating_modes("single", "gripper", "current_based_position")torque_on(bot)def setup_master_bot(bot):bot.dxl.robot_set_operating_modes("group", "arm", "pwm")bot.dxl.robot_set_operating_modes("single", "gripper", "current_based_position")torque_off(bot)def set_standard_pid_gains(bot):bot.dxl.robot_set_motor_registers("group", "arm", 'Position_P_Gain', 800)bot.dxl.robot_set_motor_registers("group", "arm", 'Position_I_Gain', 0)def set_low_pid_gains(bot):bot.dxl.robot_set_motor_registers("group", "arm", 'Position_P_Gain', 100)bot.dxl.robot_set_motor_registers("group", "arm", 'Position_I_Gain', 0)def torque_off(bot):bot.dxl.robot_torque_enable("group", "arm", False)bot.dxl.robot_torque_enable("single", "gripper", False)def torque_on(bot):bot.dxl.robot_torque_enable("group", "arm", True)bot.dxl.robot_torque_enable("single", "gripper", True)def calibrate_linear_vel(base_action, c=None):if c is None:c = 0.v = base_action[..., 0]w = base_action[..., 1]base_action = base_action.copy()base_action[..., 0] = v - c * wreturn base_actiondef smooth_base_action(base_action):return np.stack([np.convolve(base_action[:, i], np.ones(5)/5, mode='same') for i in range(base_action.shape[1])], axis=-1).astype(np.float32)def postprocess_base_action(base_action):linear_vel, angular_vel = base_actionangular_vel *= 0.9return np.array([linear_vel, angular_vel])if __name__ == '__main__':record = ArmRecorder()while True:# joint_position = get_arm_joint_positions()joint_position = record.qposprint(f"\nJoint Position:")# print(f" position: {joint_position:.6f}")print(joint_position)# time.sleep(0.5)数字孪生的real2sim相关的python脚本如下(arm_aloha_real2sim.py):

from pathlib import Path

from typing import Optional, Sequenceimport mujoco

import mujoco.viewer

import numpy as np

from loop_rate_limiters import RateLimiterimport mink

from mink.contrib import TeleopMocapfrom interbotix_xs_modules.arm import InterbotixManipulatorXS

from interbotix_xs_msgs.msg import JointSingleCommandfrom arm_aloha_real_recorder import ArmRecorder_HERE = Path(__file__).parent

_XML = _HERE / "aloha" / "scene.xml"# Single arm joint names.

_JOINT_NAMES = ["waist","shoulder","elbow","forearm_roll","wrist_angle","wrist_rotate",

]# Single arm velocity limits, taken from:

# https://github.com/Interbotix/interbotix_ros_manipulators/blob/main/interbotix_ros_xsarms/interbotix_xsarm_descriptions/urdf/vx300s.urdf.xacro

_VELOCITY_LIMITS = {k: np.pi for k in _JOINT_NAMES}def compensate_gravity(model: mujoco.MjModel,data: mujoco.MjData,subtree_ids: Sequence[int],qfrc_applied: Optional[np.ndarray] = None,

) -> None:"""Compute forces to counteract gravity for the given subtrees.Args:model: Mujoco model.data: Mujoco data.subtree_ids: List of subtree ids. A subtree is defined as the kinematic treestarting at the body and including all its descendants. Gravitycompensation forces will be applied to all bodies in the subtree.qfrc_applied: Optional array to store the computed forces. If not provided,the applied forces in `data` are used."""qfrc_applied = data.qfrc_applied if qfrc_applied is None else qfrc_appliedqfrc_applied[:] = 0.0 # Don't accumulate from previous calls.jac = np.empty((3, model.nv))for subtree_id in subtree_ids:total_mass = model.body_subtreemass[subtree_id]mujoco.mj_jacSubtreeCom(model, data, jac, subtree_id)qfrc_applied[:] -= model.opt.gravity * total_mass @ jacif __name__ == "__main__":model = mujoco.MjModel.from_xml_path(str(_XML))data = mujoco.MjData(model)# Bodies for which to apply gravity compensation.left_subtree_id = model.body("left/base_link").idright_subtree_id = model.body("right/base_link").id# Get the dof and actuator ids for the joints we wish to control.joint_names: list[str] = []velocity_limits: dict[str, float] = {}for prefix in ["left", "right"]:for n in _JOINT_NAMES:name = f"{prefix}/{n}"joint_names.append(name)velocity_limits[name] = _VELOCITY_LIMITS[n]dof_ids = np.array([model.joint(name).id for name in joint_names])actuator_ids = np.array([model.actuator(name).id for name in joint_names])configuration = mink.Configuration(model)tasks = [l_ee_task := mink.FrameTask(frame_name="left/gripper",frame_type="site",position_cost=1.0,orientation_cost=1.0,lm_damping=1.0,),r_ee_task := mink.FrameTask(frame_name="right/gripper",frame_type="site",position_cost=1.0,orientation_cost=1.0,lm_damping=1.0,),posture_task := mink.PostureTask(model, cost=1e-4),]# Enable collision avoidance between the following geoms.l_wrist_geoms = mink.get_subtree_geom_ids(model, model.body("left/wrist_link").id)r_wrist_geoms = mink.get_subtree_geom_ids(model, model.body("right/wrist_link").id)l_geoms = mink.get_subtree_geom_ids(model, model.body("left/upper_arm_link").id)r_geoms = mink.get_subtree_geom_ids(model, model.body("right/upper_arm_link").id)frame_geoms = mink.get_body_geom_ids(model, model.body("metal_frame").id)collision_pairs = [(l_wrist_geoms, r_wrist_geoms),(l_geoms + r_geoms, frame_geoms + ["table"]),]collision_avoidance_limit = mink.CollisionAvoidanceLimit(model=model,geom_pairs=collision_pairs, # type: ignoreminimum_distance_from_collisions=0.05,collision_detection_distance=0.1,)limits = [mink.ConfigurationLimit(model=model),mink.VelocityLimit(model, velocity_limits),collision_avoidance_limit,]l_mid = model.body("left/target").mocapid[0]r_mid = model.body("right/target").mocapid[0]solver = "quadprog"pos_threshold = 5e-3ori_threshold = 5e-3max_iters = 5# Initialize key_callback function.key_callback = TeleopMocap(data)with mujoco.viewer.launch_passive(model=model,data=data,show_left_ui=False,show_right_ui=False,key_callback=key_callback,) as viewer:mujoco.mjv_defaultFreeCamera(model, viewer.cam)# Initialize to the home keyframe.mujoco.mj_resetDataKeyframe(model, data, model.key("neutral_pose").id)configuration.update(data.qpos)mujoco.mj_forward(model, data)posture_task.set_target_from_configuration(configuration)# Initialize mocap targets at the end-effector site.mink.move_mocap_to_frame(model, data, "left/target", "left/gripper", "site")mink.move_mocap_to_frame(model, data, "right/target", "right/gripper", "site")rate = RateLimiter(frequency=200.0, warn=False)# 在主循环外定义时间计数器和阶段标志time_step = 0left_arm_moving = Trueright_arm_moving = Falseleft_gripper_moving = Falseright_gripper_moving = Falseleft_gripper_action = 0.037 # left gripper int qposright_gripper_action = 0.037 # right gripper int qposwhile viewer.is_running():# Update task targets.l_ee_task.set_target(mink.SE3.from_mocap_name(model, data, "left/target"))r_ee_task.set_target(mink.SE3.from_mocap_name(model, data, "right/target"))# Continuously check for autonomous key movement.key_callback.auto_key_move()# Compute velocity and integrate into the next configuration.for i in range(max_iters):vel = mink.solve_ik(configuration,tasks,rate.dt,solver,limits=limits,damping=1e-5,)configuration.integrate_inplace(vel, rate.dt)l_err = l_ee_task.compute_error(configuration)l_pos_achieved = np.linalg.norm(l_err[:3]) <= pos_thresholdl_ori_achieved = np.linalg.norm(l_err[3:]) <= ori_thresholdr_err = r_ee_task.compute_error(configuration)r_pos_achieved = np.linalg.norm(r_err[:3]) <= pos_thresholdr_ori_achieved = np.linalg.norm(r_err[3:]) <= ori_thresholdif (l_pos_achievedand l_ori_achievedand r_pos_achievedand r_ori_achieved):breakdata.ctrl[actuator_ids] = configuration.q[dof_ids]compensate_gravity(model, data, [left_subtree_id, right_subtree_id])# HJX: mocap control# 定义分阶段的时间控制# data.mocap_pos[0] = [-0.18753877, -0.019, 0.32524417] # left init pos# data.mocap_pos[1] = [0.18753877, -0.019, 0.32524417] # right init posmocap_pos_left = data.mocap_pos[0] # left posmocap_pos_right = data.mocap_pos[1] # right pos# print(mocap_pos_left)mocap_quat_left = data.mocap_quat[0] # left quat# print(f'mocap_quat_left: {mocap_quat_left}')mocap_quat_right = data.mocap_quat[1] # right quat# print(f'mocap_quat_right: {mocap_quat_right}')# arm qposleft_arm_action = data.qpos[:6] # left arm qpos# print(left_arm_action)right_arm_action = data.qpos[8:14] # right arm qpos# print(right_arm_action)# gripper 的运动范围在 0.01 —— 0.037 (0.01表示闭合的最小值,0.037表示张开的最大值)# left_gripper_action = 0.037 # left gripper int qpos# right_gripper_action = 0.037 # right gripper int qposdata.qpos[6:8] = [left_gripper_action, left_gripper_action]data.qpos[14:16] = [right_gripper_action, right_gripper_action]left_gripper_qpos = data.qpos[6:8]# print(left_gripper_qpos)right_gripper_qpos = data.qpos[14:16]# print(right_gripper_qpos)record = ArmRecorder()arm_joint_position = record.qpos[:6]# print(arm_joint_position)gripper_joint_position = record.qpos[7:8] # gripper# --- 左臂运动阶段 ---if left_arm_moving:left_arm_action = arm_joint_positionleft_gripper_qpos = gripper_joint_position# 左臂运动完成后切换阶段if time_step >= 100:left_arm_moving = Trueright_arm_moving = Truetime_step = 0 # 重置计时器# # --- 右臂运动阶段 ---# elif right_arm_moving:# # 随时间步线性移动右臂# # mocap_pos_right[0] -= 0.001# # mocap_pos_right[1] -= 0.001# # mocap_pos_right[2] -= 0.001# mocap_pos_right -= 0.001## if time_step >= 200:# left_arm_moving = False# right_arm_moving = False# left_gripper_moving = True# right_gripper_moving = True# time_step = 0 # 重置计时器# 应用更新后的 Mocap 位置data.mocap_pos[0] = mocap_pos_leftdata.mocap_pos[1] = mocap_pos_right# 应用更新后的 Mocap 姿态data.mocap_quat[0] = mocap_quat_leftdata.mocap_quat[1] = mocap_quat_right# 应用更新后的 arm qposdata.qpos[:6] = left_arm_action # left arm qposdata.qpos[8:14] = right_arm_action # right arm qposdata.qpos[6:8] = left_gripper_qpos # left gripper qposdata.qpos[14:16] = right_gripper_qpos # right gripper qpos# 物理仿真步进mujoco.mj_step(model, data)# Visualize at fixed FPS.viewer.sync()rate.sleep()# 更新计时器time_step += 1

运行arm_aloha_real2sim.py代码,效果如下:

可以看到,机械臂的动作和真实世界中的机械臂保持一致了,成功!

后续的研究就可以用真实机械臂来采集仿真环境中的操作数据了,over~~~

创作不易,感谢您的点赞与关注~~~