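Week R8: Alzheimer's Disease Diagnosis with an RNN

- 🍨 This post is a learning-log entry from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊

I. Preparation

1. Set Up the GPU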

import numpy as np
import pandas as pd
import torch
import torch.nn as nn
import torch.nn.functional as F
import seaborn as sns

# Select the hardware device: use the GPU if one is available, otherwise the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device

Output:

device(type='cuda')

2.数据导入

df = pd.read_csv("F:/jupyter lab/DL-100-days/datasets/alzheimers_dig/alzheimers_disease_data.csv")

# 删除最后一列和第一列

df = df.iloc[:, 1:-1]

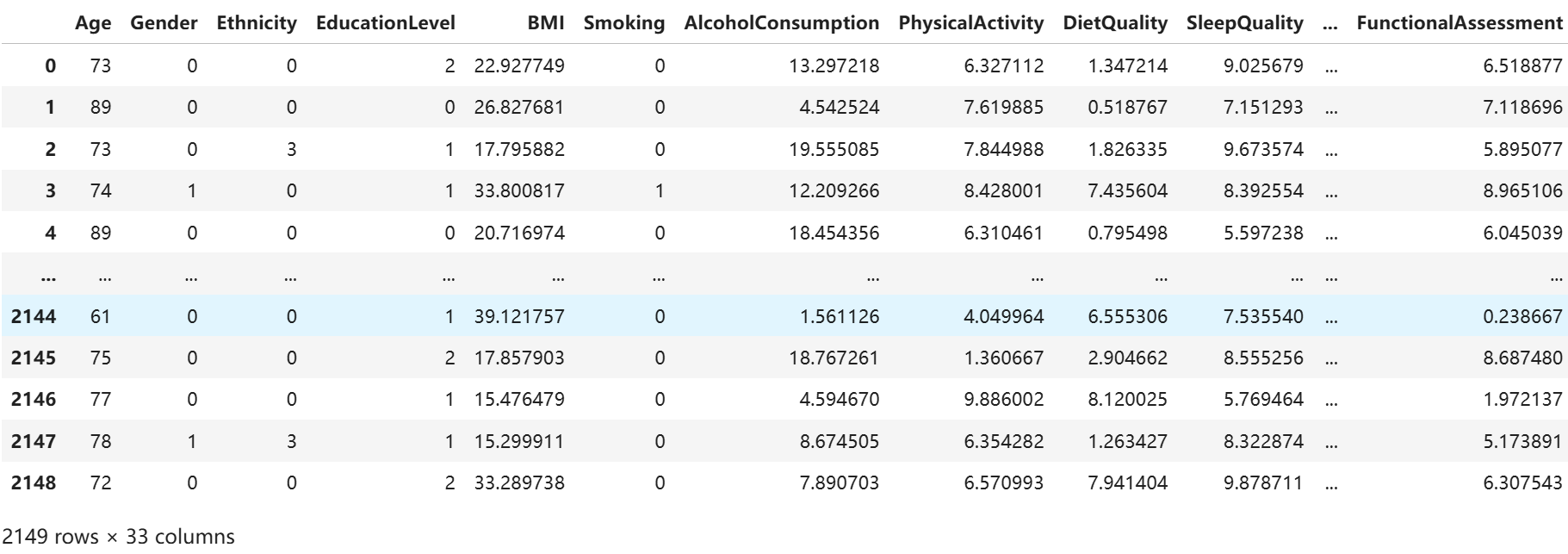

df

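II. Data Analysis

1. Standardization

from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

X = df.iloc[:, :-1]
y = df.iloc[:, -1]

# Standardize each feature column to zero mean and unit variance.
# Note that standardization is applied column by column.
scaler = StandardScaler()
X = scaler.fit_transform(X)

2. Split the Dataset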

X = torch.tensor(np.array(X), dtype=torch.float32)
y = torch.tensor(np.array(y), dtype=torch.int64)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.1, random_state=1)
X_train.shape, y_train.shape

Output:

(torch.Size([1934, 32]), torch.Size([1934]))
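The code above fits `StandardScaler` on the full feature matrix before splitting, so the test rows contribute to the mean and variance used for scaling. A common alternative, sketched here as an assumption and not as the original author's method, is to split first and fit the scaler on the training rows only. The synthetic array and the names `X_raw`, `y_raw`, `X_tr`, and `X_te` are hypothetical stand-ins for the real data.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the real data (hypothetical): 200 rows, 32 features
rng = np.random.default_rng(0)
X_raw = rng.normal(loc=5.0, scale=3.0, size=(200, 32))
y_raw = rng.integers(0, 2, size=200)

# Split first, so the test rows never influence the scaler statistics
X_tr, X_te, y_tr, y_te = train_test_split(X_raw, y_raw, test_size=0.1, random_state=1)

scaler = StandardScaler()
X_tr_std = scaler.fit_transform(X_tr)  # fit on the training rows only
X_te_std = scaler.transform(X_te)      # reuse the training mean and std

print(X_tr_std.shape, X_te_std.shape)
```

With this ordering the training columns have zero mean and unit variance exactly, while the test columns are only approximately standardized, which mirrors what happens with unseen data at prediction time.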

3. Build the Data Loaders

from torch.utils.data import TensorDataset, DataLoader

train_dl = DataLoader(TensorDataset(X_train, y_train), batch_size=32, shuffle=False)
test_dl = DataLoader(TensorDataset(X_test, y_test), batch_size=32, shuffle=False)

III. Train the Model

1. Build the Model

class model_rnn(nn.Module):
    def __init__(self):
        super(model_rnn, self).__init__()
        self.rnn0 = nn.RNN(input_size=32, hidden_size=200, num_layers=1, batch_first=True)
        self.fc0 = nn.Linear(200, 50)
        self.fc1 = nn.Linear(50, 2)

    def forward(self, x):
        # If x is 2D, add a sequence dimension of length 1 to make it 3D
        if x.dim() == 2:
            x = x.unsqueeze(1)  # [batch_size, 1, input_size]
        # Run the RNN layer
        out, h_n = self.rnn0(x)  # out: [batch_size, seq_len, hidden_size]
        # Apply the first linear layer
        out = self.fc0(out)  # [batch_size, seq_len, 50]
        # Keep only the output of the last time step
        out = out[:, -1, :]  # [batch_size, 50]
        out = self.fc1(out)  # [batch_size, 2]
        return out

model = model_rnn().to(device)
model

Output:

model_rnn(
  (rnn0): RNN(32, 200, batch_first=True)
  (fc0): Linear(in_features=200, out_features=50, bias=True)
  (fc1): Linear(in_features=50, out_features=2, bias=True)
)
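Because each sample is fed in as a sequence of length 1 and the initial hidden state defaults to zero, the recurrent weights do not contribute to the output of this model. The sketch below checks that claim numerically: it compares the `nn.RNN` output with a single tanh layer built from the same input weights and biases. The batch of 4 random samples is a hypothetical input.

```python
import torch
from torch import nn

torch.manual_seed(0)
rnn = nn.RNN(input_size=32, hidden_size=200, num_layers=1, batch_first=True)

x = torch.randn(4, 32)  # hypothetical batch: 4 samples, 32 features
with torch.no_grad():
    out, h_n = rnn(x.unsqueeze(1))  # out: [4, 1, 200], h_n: [1, 4, 200]
    # With h_0 = 0 the recurrent term W_hh @ h_0 vanishes, leaving one tanh layer
    manual = torch.tanh(x @ rnn.weight_ih_l0.T + rnn.bias_ih_l0 + rnn.bias_hh_l0)

print(out.shape, h_n.shape)
print(torch.allclose(out[:, 0, :], manual, atol=1e-5))
```

In other words, with `seq_len=1` the network behaves like a three-layer feed-forward classifier. The recurrence would only come into play if the features were arranged into several time steps.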

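2. Define the Training Function

# Training loop
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches (size / batch_size, rounded up)

    train_loss, train_acc = 0, 0  # running loss and count of correct predictions

    for X, y in dataloader:  # fetch a batch of features and labels
        X, y = X.to(device), y.to(device)

        # Compute the prediction error
        pred = model(X)          # network output
        loss = loss_fn(pred, y)  # loss between the network output and the true labels

        # Backpropagation
        optimizer.zero_grad()  # reset the gradients
        loss.backward()        # backpropagate
        optimizer.step()       # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size          # fraction of correctly classified samples
    train_loss /= num_batches  # mean loss per batch

    return train_acc, train_loss

3. Define the Test Function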

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches (size / batch_size, rounded up)
    test_loss, test_acc = 0, 0

    # Disable gradient tracking during evaluation to save memory and compute
    with torch.no_grad():
        for X, y in dataloader:
            X, y = X.to(device), y.to(device)

            # Compute the loss
            pred = model(X)
            loss = loss_fn(pred, y)

            test_loss += loss.item()
            test_acc += (pred.argmax(1) == y).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss

4. Train the Model

loss_fn = nn.CrossEntropyLoss() # 创建损失函数

learn_rate = 5e-5

opt = torch.optim.Adam(model.parameters(), lr= learn_rate)epochs = 50train_loss = []

train_acc = []

test_loss = []

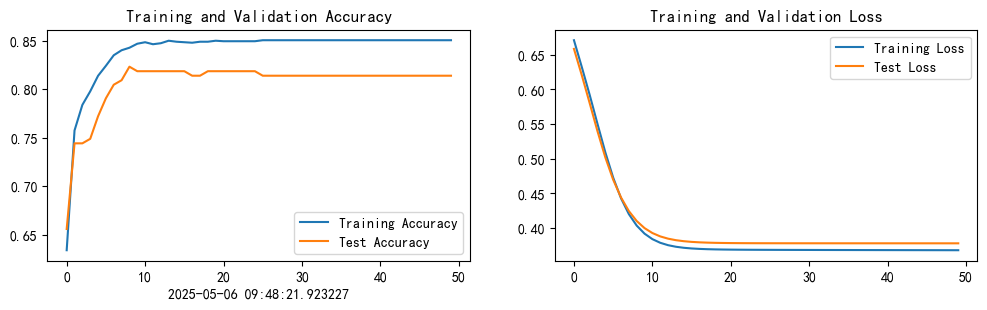

test_acc = []for epoch in range(epochs):model.train()epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)model.eval()epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)train_acc.append(epoch_train_acc)train_loss.append(epoch_train_loss)test_acc.append(epoch_test_acc)test_loss.append(epoch_test_loss)# 获取当前的学习率lr = opt.state_dict()['param_groups'][0]['lr']template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss,epoch_test_acc*100, epoch_test_loss, lr))print('Done')Epoch: 1, Train_acc:63.4%, Train_loss:0.671, Test_acc:65.6%, Test_loss:0.659, Lr:5.00E-05 Epoch: 2, Train_acc:75.7%, Train_loss:0.632, Test_acc:74.4%, Test_loss:0.621, Lr:5.00E-05 Epoch: 3, Train_acc:78.4%, Train_loss:0.592, Test_acc:74.4%, Test_loss:0.580, Lr:5.00E-05 Epoch: 4, Train_acc:79.8%, Train_loss:0.550, Test_acc:74.9%, Test_loss:0.540, Lr:5.00E-05 Epoch: 5, Train_acc:81.4%, Train_loss:0.509, Test_acc:77.2%, Test_loss:0.502, Lr:5.00E-05 ..........

Epoch:46, Train_acc:85.1%, Train_loss:0.368, Test_acc:81.4%, Test_loss:0.378, Lr:5.00E-05 Epoch:47, Train_acc:85.1%, Train_loss:0.368, Test_acc:81.4%, Test_loss:0.378, Lr:5.00E-05 Epoch:48, Train_acc:85.1%, Train_loss:0.368, Test_acc:81.4%, Test_loss:0.378, Lr:5.00E-05 Epoch:49, Train_acc:85.1%, Train_loss:0.368, Test_acc:81.4%, Test_loss:0.378, Lr:5.00E-05 Epoch:50, Train_acc:85.1%, Train_loss:0.368, Test_acc:81.4%, Test_loss:0.378, Lr:5.00E-05 ==================== Done ====================
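The log shows the metrics no longer changing over the last five epochs. One way to read such a history programmatically is sketched below; the helpers `best_epoch` and `epochs_since_improvement` are hypothetical names introduced for illustration, and the two lists contain only the test accuracies visible in the log above (epochs 1 to 5 and 46 to 50).

```python
def best_epoch(history):
    """Return (1-based epoch, value) of the highest entry; the earliest epoch wins ties."""
    best_idx = max(range(len(history)), key=history.__getitem__)
    return best_idx + 1, history[best_idx]


def epochs_since_improvement(history, min_delta=0.0):
    """Count trailing epochs that failed to beat the best earlier value by more than min_delta."""
    best = float("-inf")
    stale = 0
    for value in history:
        if value > best + min_delta:
            best = value
            stale = 0
        else:
            stale += 1
    return stale


# Test accuracies copied from the visible parts of the log
test_acc_first5 = [0.656, 0.744, 0.744, 0.749, 0.772]
test_acc_last5 = [0.814, 0.814, 0.814, 0.814, 0.814]

print(best_epoch(test_acc_first5))               # (5, 0.772)
print(epochs_since_improvement(test_acc_last5))  # 4
```

A counter like this is the usual building block for early stopping: training halts once the count exceeds a chosen patience value.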

IV. Model Evaluation

1. Loss and Accuracy Plots

import matplotlib.pyplot as plt
# Suppress warnings
import warnings
warnings.filterwarnings("ignore")

plt.rcParams['font.sans-serif'] = ['SimHei']  # render Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False    # render the minus sign correctly
plt.rcParams['figure.dpi'] = 100              # figure resolution

from datetime import datetime
current_time = datetime.now()

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))
plt.subplot(1, 2, 1)

plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')
plt.xlabel(current_time)  # timestamp of the run, shown as the x-axis label

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')
plt.show()

print("============输入数据shape为==============")

print("X_test.shape:",X_test.shape)

print("y_test.shape:",y_test.shape)pred = model(X_test.to(device)).argmax(1).cpu().numpy()print("\n==========输出数据Shape为=============")

print("pred.shape:",pred.shape)============输入数据shape为============== X_test.shape: torch.Size([215, 32]) y_test.shape: torch.Size([215])==========输出数据Shape为============= pred.shape: (215,)

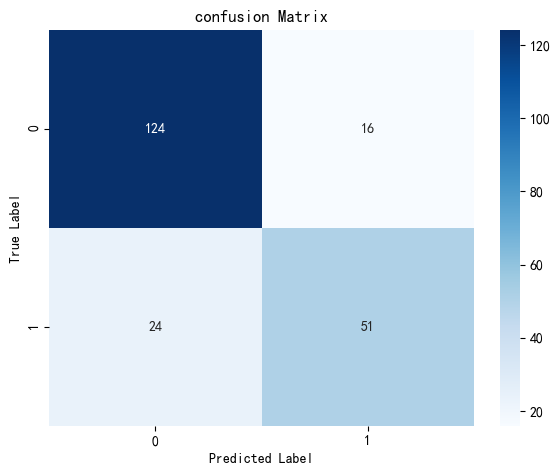

2. Confusion Matrix

import numpy as np
import matplotlib.pyplot as plt
from sklearn.metrics import confusion_matrix, ConfusionMatrixDisplay

# Compute the confusion matrix
cm = confusion_matrix(y_test, pred)

plt.figure(figsize=(6, 5))
plt.suptitle('')
sns.heatmap(cm, annot=True, fmt="d", cmap="Blues")

# Adjust the font sizes
plt.xticks(fontsize=10)
plt.yticks(fontsize=10)
plt.title("Confusion Matrix", fontsize=12)
plt.xlabel("Predicted Label", fontsize=10)
plt.ylabel("True Label", fontsize=10)

# Show the figure
plt.tight_layout()  # adjust the layout to prevent overlap
plt.show()
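A confusion matrix also yields per-class metrics that plain accuracy hides, which matters for a diagnosis task where missed cases and false alarms have different costs. The sketch below derives accuracy, precision, recall, and F1 from a 2x2 matrix. The counts are made up for illustration and are not the results of this experiment.

```python
import numpy as np

# Hypothetical 2x2 confusion matrix (made-up counts, not the results above).
# scikit-learn convention: rows are true labels, columns are predicted labels.
cm = np.array([[50, 10],
               [5, 35]])

tn, fp, fn, tp = cm.ravel()

accuracy = (tp + tn) / cm.sum()
precision = tp / (tp + fp)  # of the samples predicted as diseased, how many truly are
recall = tp / (tp + fn)     # of the truly diseased samples, how many were found
f1 = 2 * precision * recall / (precision + recall)

print(f"accuracy={accuracy:.3f} precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

The same unpacking with `cm.ravel()` should work on the `cm` computed above, since `confusion_matrix` returns a 2x2 array for a binary problem.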

3. Use the Model for Prediction

test_X = X_test[0].reshape(1, -1)  # X_test[0] is the input sample
pred = model(test_X.to(device)).argmax(1).item()
print("Model prediction:", pred)
print("==" * 20)
print("0: no disease")
print("1: disease")

Output:

Model prediction: 0
========================================
0: no disease
1: disease
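The prediction above reports only the class index. If a confidence value is also wanted, the logits can be passed through softmax, as in the sketch below. The small `stand_in` network and the random `sample` are hypothetical placeholders so that the block runs on its own; in the post's setting they would correspond to the trained `model` and a row of `X_test`.

```python
import torch
from torch import nn
import torch.nn.functional as F

torch.manual_seed(0)
# Hypothetical stand-in for the trained network: any module that maps
# [batch, 32] features to [batch, 2] logits fits here
stand_in = nn.Sequential(nn.Linear(32, 50), nn.ReLU(), nn.Linear(50, 2))
sample = torch.randn(1, 32)  # hypothetical standardized sample

stand_in.eval()        # inference mode for layers such as dropout
with torch.no_grad():  # no gradients are needed for prediction
    logits = stand_in(sample)
    probs = F.softmax(logits, dim=1)  # each row sums to 1

label = probs.argmax(1).item()
print("probabilities:", probs.squeeze(0).tolist())
print("predicted label:", label)
```

Softmax does not change which class wins, so `probs.argmax(1)` and `logits.argmax(1)` always agree.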

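V. Takeaways

1. This week I used an RNN to predict Alzheimer's disease, with the diagnosis encoded as a binary label (0 = no disease, 1 = disease), and added a confusion matrix to the evaluation.

2. Compared with an LSTM, an RNN has fewer parameters and is cheaper to compute. An LSTM has more parameters and takes longer to train, but it retains information across long sequences better.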
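The parameter gap can be made concrete by counting the weights of the two PyTorch layers at the sizes used in this post (32 inputs, 200 hidden units). The helper `count_params` is a hypothetical name introduced for this sketch.

```python
from torch import nn


def count_params(module):
    """Total number of parameters in a module."""
    return sum(p.numel() for p in module.parameters())


rnn = nn.RNN(input_size=32, hidden_size=200, num_layers=1, batch_first=True)
lstm = nn.LSTM(input_size=32, hidden_size=200, num_layers=1, batch_first=True)

# RNN: 200*32 (input weights) + 200*200 (recurrent weights) + 200 + 200 (two biases)
# LSTM: four gates, each with its own copy of those weights and biases
print(count_params(rnn))   # 46800
print(count_params(lstm))  # 187200
```

At the same hidden size the LSTM layer carries exactly four times as many parameters, which accounts for most of its extra training cost.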