1. Implementing an LSTM from Scratch

# Implement a long short-term memory (LSTM) network from scratch

import torch

from torch import nn

from d2l import torch as d2l

# Load the Time Machine dataset

batch_size,num_steps = 32,35

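train_iter,vocab = d2l.load_data_time_machine(batch_size,num_steps)

# 1. Define and initialize the model parameters

# The hyperparameter num_hiddens sets the number of hidden units. Weights are drawn from a Gaussian distribution with standard deviation 0.01, and biases are set to 0.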

def get_lstm_params(vocab_size, num_hiddens, device):
    num_inputs = num_outputs = vocab_size
    def normal(shape):
        return torch.randn(size=shape, device=device) * 0.01
    def three():
        return (normal((num_inputs, num_hiddens)),
                normal((num_hiddens, num_hiddens)),
                torch.zeros(num_hiddens, device=device))
    W_xi, W_hi, b_i = three()  # input gate parameters
    W_xf, W_hf, b_f = three()  # forget gate parameters
    W_xo, W_ho, b_o = three()  # output gate parameters
    W_xc, W_hc, b_c = three()  # candidate memory cell parameters
    # Output layer parameters
    W_hq = normal((num_hiddens, num_outputs))
    b_q = torch.zeros(num_outputs, device=device)
    # Attach gradients
    params = [W_xi, W_hi, b_i, W_xf, W_hf, b_f, W_xo, W_ho, b_o,
              W_xc, W_hc, b_c, W_hq, b_q]
    for param in params:
        param.requires_grad_(True)
    return params
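As a quick sanity check on the shapes above, the following sketch counts the learnable scalars without building any tensors. The helper name `count_lstm_params` is hypothetical, and `vocab_size = 28` is an assumption about the character-level Time Machine vocabulary; substitute `len(vocab)` from your own run.

```python
def count_lstm_params(vocab_size, num_hiddens):
    """Count the scalars that get_lstm_params would create (illustrative helper)."""
    num_inputs = num_outputs = vocab_size
    # Each of the four groups (input gate, forget gate, output gate,
    # candidate memory cell) holds one W_x*, one W_h* and one bias.
    per_group = (num_inputs * num_hiddens
                 + num_hiddens * num_hiddens
                 + num_hiddens)
    # Output layer: W_hq and b_q.
    output_layer = num_hiddens * num_outputs + num_outputs
    return 4 * per_group + output_layer


# vocab_size=28 is an assumed value, not taken from the original post.
print(count_lstm_params(28, 256))  # 299036
```

Most of the total comes from the four hidden-to-hidden matrices, so the count grows roughly quadratically with `num_hiddens`.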

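# 2. Define the model

# In the initialization function, the LSTM state must include an additional memory cell alongside the hidden state. Both are initialized to 0 and have shape (batch size, number of hidden units).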

def init_lstm_state(batch_size, num_hiddens, device):
    return (torch.zeros((batch_size, num_hiddens), device=device),
            torch.zeros((batch_size, num_hiddens), device=device))

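# The model itself is defined as discussed earlier: three gates plus an additional memory cell.

# Only the hidden state is passed to the output layer; the memory cell $\mathbf{C}_t$ does not take part in the output computation directly.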
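For reference, the per-time-step update described in the comments above can be written with the same parameter names used in this section. This is the standard LSTM formulation, restated here as a summary; $\sigma$ is the sigmoid function and $\odot$ denotes elementwise multiplication.

```latex
\begin{aligned}
\mathbf{I}_t &= \sigma(\mathbf{X}_t \mathbf{W}_{xi} + \mathbf{H}_{t-1} \mathbf{W}_{hi} + \mathbf{b}_i), \\
\mathbf{F}_t &= \sigma(\mathbf{X}_t \mathbf{W}_{xf} + \mathbf{H}_{t-1} \mathbf{W}_{hf} + \mathbf{b}_f), \\
\mathbf{O}_t &= \sigma(\mathbf{X}_t \mathbf{W}_{xo} + \mathbf{H}_{t-1} \mathbf{W}_{ho} + \mathbf{b}_o), \\
\tilde{\mathbf{C}}_t &= \tanh(\mathbf{X}_t \mathbf{W}_{xc} + \mathbf{H}_{t-1} \mathbf{W}_{hc} + \mathbf{b}_c), \\
\mathbf{C}_t &= \mathbf{F}_t \odot \mathbf{C}_{t-1} + \mathbf{I}_t \odot \tilde{\mathbf{C}}_t, \\
\mathbf{H}_t &= \mathbf{O}_t \odot \tanh(\mathbf{C}_t), \\
\mathbf{Y}_t &= \mathbf{H}_t \mathbf{W}_{hq} + \mathbf{b}_q.
\end{aligned}
```

Note that $\mathbf{C}_t$ enters $\mathbf{Y}_t$ only through $\mathbf{H}_t$, which is what "does not take part directly" means.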

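def lstm(inputs, state, params):
    [W_xi, W_hi, b_i, W_xf, W_hf, b_f, W_xo, W_ho, b_o,
     W_xc, W_hc, b_c, W_hq, b_q] = params
    (H, C) = state
    outputs = []
    # inputs has shape (num_steps, batch_size, vocab_size); iterate over time steps
    for X in inputs:
        I = torch.sigmoid((X @ W_xi) + (H @ W_hi) + b_i)  # input gate
        F = torch.sigmoid((X @ W_xf) + (H @ W_hf) + b_f)  # forget gate
        O = torch.sigmoid((X @ W_xo) + (H @ W_ho) + b_o)  # output gate
        C_tilda = torch.tanh((X @ W_xc) + (H @ W_hc) + b_c)  # candidate memory cell
        C = F * C + I * C_tilda  # update the memory cell
        H = O * torch.tanh(C)  # update the hidden state
        Y = (H @ W_hq) + b_q  # output layer uses only H
        outputs.append(Y)
    return torch.cat(outputs, dim=0), (H, C)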
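One way to gain confidence in the loop body of `lstm` is to run a single time step by hand and compare it with PyTorch's built-in `nn.LSTMCell`. The sketch below does that on small random tensors. The sizes, the seed, the helper `three`, and the names `H_new` and `C_new` are illustrative assumptions, and the comparison itself is an addition that is not part of the original post.

```python
import torch
from torch import nn

torch.manual_seed(0)
batch_size, num_inputs, num_hiddens = 2, 5, 4  # small, arbitrary sizes


def three():
    """One (W_x*, W_h*, b_*) triple, with random biases to exercise them too."""
    return (torch.randn(num_inputs, num_hiddens) * 0.1,
            torch.randn(num_hiddens, num_hiddens) * 0.1,
            torch.randn(num_hiddens) * 0.1)


W_xi, W_hi, b_i = three()  # input gate
W_xf, W_hf, b_f = three()  # forget gate
W_xo, W_ho, b_o = three()  # output gate
W_xc, W_hc, b_c = three()  # candidate memory cell

X = torch.randn(batch_size, num_inputs)
H = torch.randn(batch_size, num_hiddens)
C = torch.randn(batch_size, num_hiddens)

# One time step, written like the loop body of lstm().
I = torch.sigmoid(X @ W_xi + H @ W_hi + b_i)
F = torch.sigmoid(X @ W_xf + H @ W_hf + b_f)
O = torch.sigmoid(X @ W_xo + H @ W_ho + b_o)
C_tilda = torch.tanh(X @ W_xc + H @ W_hc + b_c)
C_new = F * C + I * C_tilda
H_new = O * torch.tanh(C_new)

# Load the same weights into nn.LSTMCell. PyTorch stacks the gates in the
# order input, forget, candidate, output and stores the matrices transposed.
cell = nn.LSTMCell(num_inputs, num_hiddens)
with torch.no_grad():
    cell.weight_ih.copy_(torch.cat([W_xi, W_xf, W_xc, W_xo], dim=1).T)
    cell.weight_hh.copy_(torch.cat([W_hi, W_hf, W_hc, W_ho], dim=1).T)
    cell.bias_ih.copy_(torch.cat([b_i, b_f, b_c, b_o]))
    cell.bias_hh.zero_()  # the scratch model keeps a single bias per gate
    H_ref, C_ref = cell(X, (H, C))

print(torch.allclose(H_new, H_ref, atol=1e-5),
      torch.allclose(C_new, C_ref, atol=1e-5))
```

If both comparisons report agreement, the hand-written gate equations match the built-in cell up to floating-point rounding.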

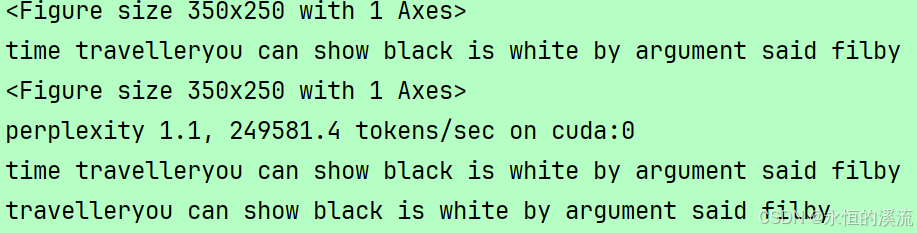

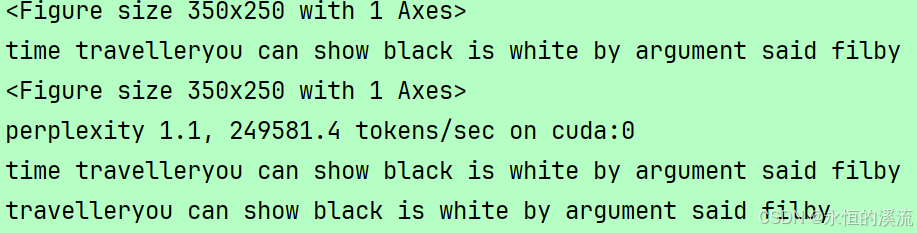

# 3. Training and prediction

vocab_size,num_hiddens,device = len(vocab),256,d2l.try_gpu()

num_epochs,lr = 500,1

model = d2l.RNNModelScratch(len(vocab),num_hiddens,device,get_lstm_params,init_lstm_state,lstm)

d2l.train_ch8(model,train_iter,vocab,lr,num_epochs,device)

d2l.plt.show()

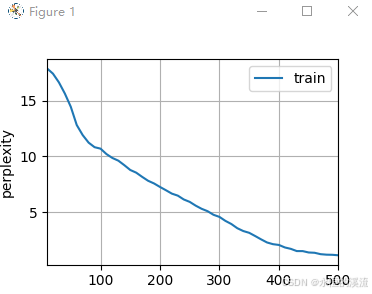

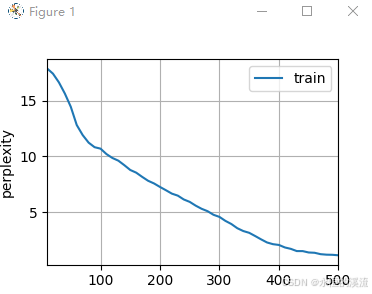

2. Concise Implementation of the LSTM

# Implement the LSTM concisely with the high-level API

import torch

from torch import nn

from d2l import torch as d2l

# Load the Time Machine dataset

batch_size,num_steps = 32,35

train_iter,vocab = d2l.load_data_time_machine(batch_size,num_steps)

vocab_size,num_hiddens,device = len(vocab),256,d2l.try_gpu()

num_epochs,lr = 500,1

num_inputs = vocab_size

lstm_layer = nn.LSTM(num_inputs,num_hiddens)

model = d2l.RNNModel(lstm_layer,len(vocab))

model = model.to(device)

d2l.train_ch8(model,train_iter,vocab,lr,num_epochs,device)

d2l.plt.show()
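To see what the `nn.LSTM` layer used above returns, the following sketch feeds it a random batch and prints the resulting shapes. It needs only PyTorch. The value `vocab_size = 28` and the random input standing in for one-hot encoded characters are assumptions made for illustration.

```python
import torch
from torch import nn

# vocab_size=28 is an assumed value; the other sizes match the script above.
num_steps, batch_size, vocab_size, num_hiddens = 35, 32, 28, 256

lstm_layer = nn.LSTM(vocab_size, num_hiddens)

# Stand-in for one-hot inputs of shape (num_steps, batch_size, vocab_size).
X = torch.rand(num_steps, batch_size, vocab_size)
# nn.LSTM expects the state as a tuple (hidden state, memory cell),
# each of shape (num_layers, batch_size, num_hiddens).
state = (torch.zeros(1, batch_size, num_hiddens),
         torch.zeros(1, batch_size, num_hiddens))

Y, (H, C) = lstm_layer(X, state)
print(Y.shape)           # torch.Size([35, 32, 256])
print(H.shape, C.shape)  # torch.Size([1, 32, 256]) torch.Size([1, 32, 256])
```

`Y` holds the hidden state at every time step rather than vocabulary logits. Unlike the from-scratch `lstm` function, the layer has no output projection of its own, so the mapping back to `vocab_size` has to be applied outside it.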